This is Part 2 of an article I published last week: Low-Latency Live Wireless Flight Video on all your devices (And YouTube Live Streaming it)

Now I'm on to using it with a Convolutional Neural Network.

Well......

Where are we at today? Some years ago, I remember clumsily mashing together my first remote-controlled drone using an Arduino and a lot of blind faith. Right now I want to say that I feel like it didn't really do much; but it did, and it does, because not only did it fly and stay in the air, it was able to follow GPS coordinates and balance itself against the wind, keeping itself in a single spot in the sky. I thought, and still do think, that is quite a spectacular and amazing thing for humans to have achieved.

Of course, as time progressed, I got used to it doing all of that, and I decided to walk along the path a little more and try to educate myself in autonomous aviation. I learned to build gimbals, and to photogrammetrically map routes that I had plotted for my flying Arduino to fly. I experimented with sensors, writing code to teach me about many things, from sonar sensing to radiation activity levels. I even learned how to reverse engineer 32-bit microcontrollers. I discovered what does work well, and what does not work so well at this period in time. I feel happy knowing that there are little elves in the background of life who are working on all these things and refining it all to ensure that next Christmas' toys will work perfectly.

I keep going along the autonomous aviation path, but I find myself having to take more frequent breaks from it all to keep my mind (relatively) sane and healthy - I have worked on gardens, and deviated towards e-bike building. But I have always felt happy knowing that I will return to autonomous aviation and pick it up again.

For a while I have found myself leaning towards wireless video communication. Trying to understand the complexities that prevent and enable fast wireless video feeds with low latency and good connectivity is a big task. There are people here who each have differing ways of making this happen, and every approach has its shortcomings as well as things it does better than the others. I think an open standard will arrive eventually, but not without some internet bloodshed and flamewars along the way.

In the meantime, I discovered recently a way to get a live flight video feed on all my screen devices which has enabled me to move forward quite comfortably towards new experiments such as Live Streaming my flight footage on YouTube, and this weekend I have been teaching myself how to run Convolutional Neural Networks (or CNNs) with my homebuilt live flight stream.

It seems to be becoming this year's buzzword - Neural Networks, Machine Learning, Artificial Intelligence, Deep Dreaming, Deep Learning, Training.

There are people out there desperate to champion themselves as an expert or leader of this emerging field, but it is quite contradictory, isn't it? In such a fast-evolving area of study, how can anyone be an expert? We're all finding our way through the dark; it's just that some are adding "I am finding my way through the dark way better than you" to their resume. I have lots of respect for some inspiring people out there on the internet who do wonderful things, and their example gives me a better yardstick for judging the others.

So, for my forays into Convolutional Neural Networks (CNNs), I have thought about some things to get me up and running as best I can from the get-go. It helps if you can address a few questions:

1) Hardware - Is it a good idea to use something cheap and less powerful, or something mighty but more expensive? Does it have to be 32-bit or 64-bit? Is it possible to use cloud processing over an internet connection, or does it have to be processed on the hardware locally/offline? Do your chosen peripheral devices work with the hardware?

2) Software - Is it at a stage where it is functional, and also is it functional on your chosen hardware? Will it compile without a blizzard of errors?

3) Time - Are you making good use of it, or are you wasting it trying to force your way to a result? Is it worth abandoning one hardware/software combination as a lost cause in order to try another setup which might bring better success?

Two systems I decided to use are my Raspberry Pi 2, and my Nvidia Jetson TK1. Both have limitations and a fair amount of compromise is required to get a CNN running on either device. I chose my Jetson as a first and obvious choice to begin.

CNN on Jetson TK1

What am I doing?

- On my Nvidia Jetson TK1 Ubuntu board, I am running two shell scripts. The first one captures a photo image from the drone during its flight (by running the script by hand; it is not automated or triggered yet).

- The second shell script takes that photo image and runs it through a convolutional neural network (CNN) trained to detect objects, the type of object, and the number of objects. For example, horses, and the number of horses in a field.
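The two steps above could be chained by a small wrapper. This is only a minimal sketch in Python, not the scripts actually used here: it runs a capture command, then a CNN command if the capture succeeded, and times each one. The function names are invented for illustration, and the commands themselves are left as parameters because the real scripts are not shown.

```python
import subprocess
import time


def run_step(command):
    """Run one command and return (exit_code, seconds_taken, stdout_text)."""
    start = time.monotonic()
    result = subprocess.run(command, capture_output=True, text=True)
    elapsed = time.monotonic() - start
    return result.returncode, elapsed, result.stdout


def capture_then_detect(capture_cmd, detect_cmd):
    """Run the capture step, then the CNN step only if the capture succeeded."""
    code, capture_secs, _ = run_step(capture_cmd)
    if code != 0:
        return None  # no image was captured, so there is nothing to process
    code, detect_secs, output = run_step(detect_cmd)
    return {
        "exit_code": code,            # exit code of the CNN step
        "capture_secs": capture_secs,
        "detect_secs": detect_secs,
        "output": output,             # whatever the CNN step printed
    }
```

With hypothetical script names, a call might look like `capture_then_detect(["./capture_frame.sh", "frame.jpg"], ["./run_cnn.sh", "frame.jpg"])`. Keeping the two steps separate makes it easy to see which one is eating the time.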

For my experiments I knew that my TK1 board is considered an ageing device. But I believe you must "do what you can, with what you have, where you are," and for me, tackling the problems of using ageing hardware in a fast-evolving field is not impossible, but an exercise in self-discovery.

Using the Jetson TK1 board, I was somewhat aware of its limitation as a 32-bit board. Most CNNs are now developed for 64-bit platforms, which is an immediate hurdle, as code written for one tends not to compile well on the other. Perseverance with some code editing and file/folder renaming helped me to solve that problem, and I got my CNN running on my Jetson.

Initially, I ran my first tests using only the TK1 CPU due to not configuring the code properly. This meant that processing an image took up to 35 seconds.

(A drone image of horses I used with CNN..)

With the CNN set up to run on the TK1's GPU using CUDA (CUDA 6.5), it is clearly much faster. The same image was processed in 0.8 seconds.

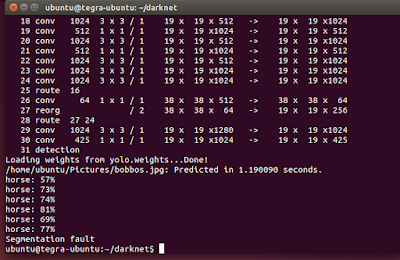

(CNN doing its thing)
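To put the two timings side by side, here is a quick piece of arithmetic in Python using only the figures quoted above (35 seconds at worst on the CPU, 0.8 seconds on the GPU). It works out to a speedup of roughly 44 times, and about 1.25 images per second if every image took 0.8 seconds, which is still well short of live video frame rates.

```python
cpu_seconds = 35.0  # slowest CPU-only run reported above
gpu_seconds = 0.8   # the same image processed on the GPU

# How many times faster the GPU run was than the slowest CPU run.
speedup = cpu_seconds / gpu_seconds

# Throughput if every image took the same 0.8 seconds on the GPU.
gpu_images_per_second = 1.0 / gpu_seconds

print(f"GPU speedup: about {speedup:.0f}x")
print(f"GPU throughput: {gpu_images_per_second:.2f} images per second")
```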

I think I will use GPU processing exclusively.... Or so I thought.

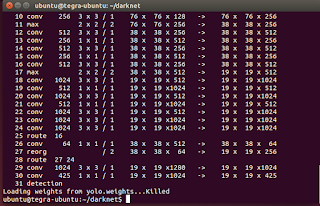

(Killed! Gah!)

(other images used)

One of the problems I face using the TK1 with an offline CNN designed for 64-bit is my limited memory availability on the TK1 board. I am constantly facing 'killed' when processing, and I believe this is due to memory limits.
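A 'Killed' message from the shell generally means the process received SIGKILL, which is the signal the Linux out-of-memory killer sends, and the kernel log (for example via `dmesg`) would normally confirm it. As a minimal sketch, not the scripts used here, the Python below estimates available memory from the text of `/proc/meminfo` before a run, and checks whether a finished subprocess died from SIGKILL. The function names are made up for illustration, and the fallback for kernels that do not report `MemAvailable` is only a rough estimate.

```python
import signal


def available_kib(meminfo_text):
    """Estimate available memory in KiB from the text of /proc/meminfo."""
    fields = {}
    for line in meminfo_text.splitlines():
        name, _, rest = line.partition(":")
        parts = rest.split()
        if parts and parts[0].isdigit():
            fields[name.strip()] = int(parts[0])
    if "MemAvailable" in fields:
        return fields["MemAvailable"]
    # Older kernels may not report MemAvailable, so fall back to a rough sum.
    return fields.get("MemFree", 0) + fields.get("Buffers", 0) + fields.get("Cached", 0)


def was_sigkilled(returncode):
    """True if a subprocess return code means the process died from SIGKILL."""
    # Python's subprocess module reports death-by-signal as a negative number.
    return returncode == -signal.SIGKILL
```

On the board, the text would come from `open("/proc/meminfo").read()` and the return code from `subprocess.run(...).returncode`. Logging both for each image would show whether the kills line up with low memory.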

My further experiments will be to try a cloud-based CNN or a 'Lite' version. However, I can confirm that I am able to capture live flight images from my drone in the sky, capture them on my Jetson TK1 with less than 0.1 millisecond latency, and run my CNN to process the image in under 20 seconds to give me a set of objects identified by the CNN within that image, and it is usually correct.

My goals are:

- To capture live stream video and live process that footage, but without memory limit errors.

- To run a 'take photo' script using a particular visual object source, then process it using the CNN.

- To have the Jetson TK1 perform a command upon detection of chosen object (for example, make LED lights flash, or tweet message, or make the drone do something)
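The last goal could be sketched roughly as below. This assumes, purely for illustration, that the CNN prints one line per detected object in the form `label: NN%`; the real output format may well differ, and the function names are invented. The sketch counts objects per label above a confidence threshold and calls an action when a chosen object turns up.

```python
from collections import Counter


def count_detections(cnn_output, min_confidence=50):
    """Count detected objects per label from lines shaped like 'horse: 87%'."""
    counts = Counter()
    for line in cnn_output.splitlines():
        label, sep, score = line.rpartition(":")
        score = score.strip().rstrip("%")
        # Skip lines that are not in the assumed 'label: NN%' shape.
        if sep and label.strip() and score.isdigit():
            if int(score) >= min_confidence:
                counts[label.strip()] += 1
    return counts


def on_detection(counts, target, action):
    """Call action(count) if at least one target object was counted."""
    if counts[target] > 0:
        action(counts[target])
        return True
    return False
```

For example, `on_detection(count_detections(output), "horse", flash_leds)` would call a hypothetical `flash_leds(count)` function whenever at least one horse is reported. The action is passed in as a function so that flashing LEDs, sending a tweet, or commanding the drone can be swapped without touching the parsing.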

Uses for this other than 'Military or Law Enforcement' - When I talk with friends about this stuff, they laugh and call me a military facilitator. But the military probably already use CNNs to help them detect threats, make decisions without waking up the General, and detect civilians within target areas so as to avoid mistakes. So what other uses could this have for normal life?

- Mountain Rescue: Detecting people in Avalanche areas, or lost hill walkers.

- In London it could be used to count the number of houses in the area who use coal fires (heat/smoke identification & counting).

- The number of Diesel vehicles which are in poor condition (billowing excessive smoke)

- Detecting greater one-horned rhino numbers in Chitwan National Park

- The number of tents in a field (Festival safety perhaps)

- Bird Nesting types & numbers

Thanks for reading, and I hope it gives you a little bit of inspiration.

http://dalybulge.blogspot.co.uk/2017/04/part-2-low-latency-live-wireless-flight.html

Comments

Live tracking from the drone camera using OpenCV to track a fruit object:

http://dalybulge.blogspot.co.uk/2017/05/part-3-low-latency-live-wir...

Capturing live from drone & detecting

Up and running capturing live footage at a so-so 6.2fps on the TK1

Hi Marc, I'm experienced with Raspberry Pi (All versions), using gstreamer on them for video transmission:

http://diydrones.com/group/companion_computers/forum/topics/compani...

I figure any Linux SoC board that can run gstreamer will also work in the same way, and I figure you can mix and match boards; the TX and RX boards don't have to be the same model.

I don't use any SoC (Pi or Beaglebone) type board as a flight controller, as I have Pixhawk & APM boards on all my drones controlling flight, but if you use a shield/hat board you can use a Pi or Beaglebone as flight board and video board all in one. As far as I have seen, no one has yet made an SD card image allowing just plug & play.

I think for neural network processing of live flight footage at 20-30fps, we can forget about most small Linux boards such as Pi/Beaglebone/Jetson. For most people who do not wish to spend lots of money on a niche product for neural networks, a high-power laptop such as an HP OMEN with Nvidia GTX 1080 graphics onboard, or an eGPU unit connected via the laptop's Thunderbolt port, will give buyers more use long term. Using the laptop as a ground station capturing video (low latency) is a good idea for hobby drones. I don't think drone-mounted micro supercomputers are really necessary when you think about it; 0.1 second latency is fine in my opinion, and most tiny Linux boards are not yet capable of real-time video neural network processing. If you look at the Jetson TK1 it has 192 CUDA cores, the TX1 & TX2 have 256 CUDA cores, a GTX 1080 laptop has 2560 CUDA cores!...And it lets you write up your results too :)

Have you seen the Beaglebone blue? I have one. Do you think it has enough horsepower to do both video and FC?

Decent Laptop for CNN processing? Or Nuc with eGPU?

My Drone Vision Software automatically detected and Identified 22 Objects all by itself (in 1.2 seconds):
