It's been a long journey from the G-Buggy

to the failed balancer

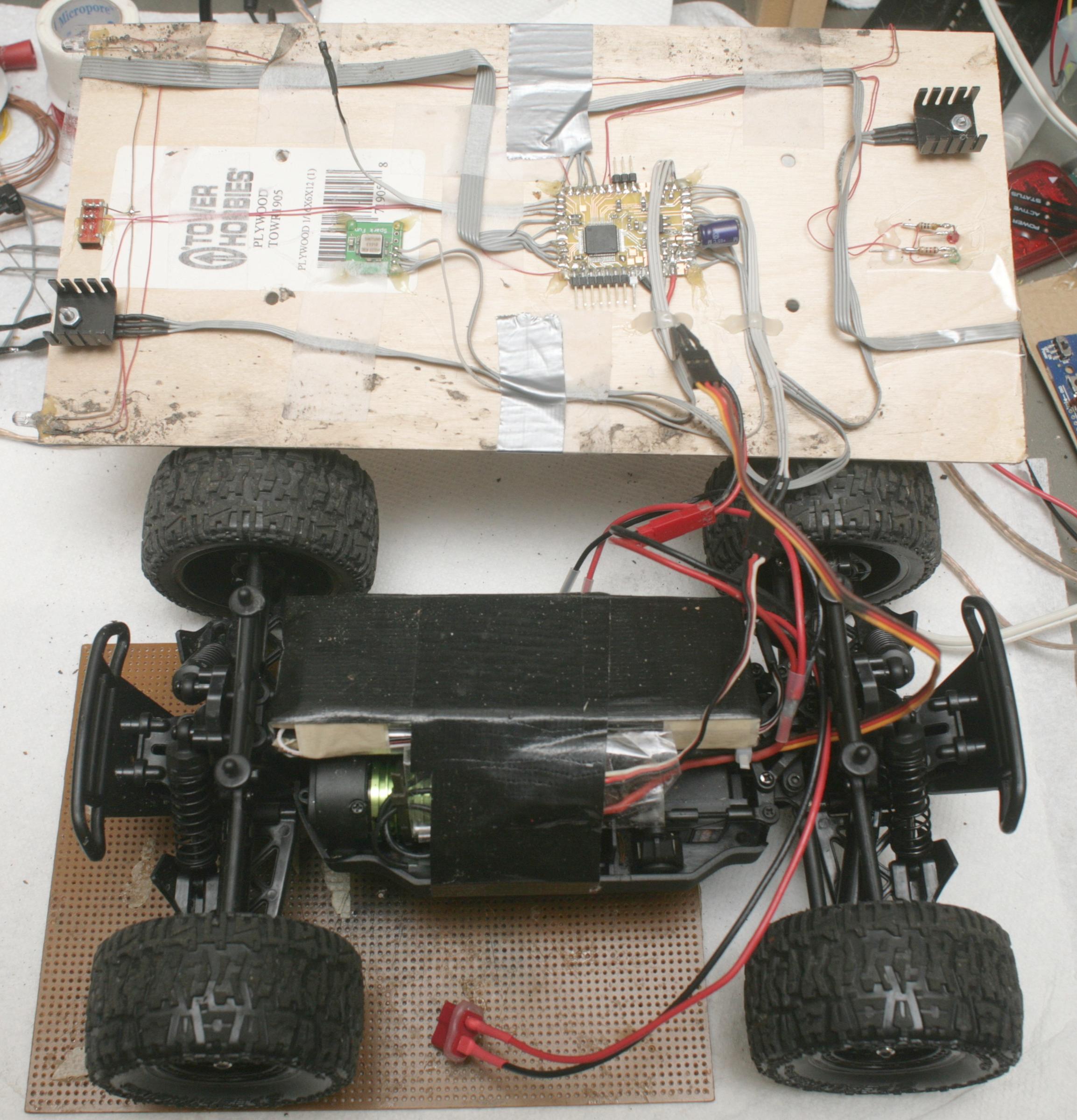

to the brushless rover.

All in the name of the cheapest, most compact, longest range vehicle which could go at running speed on the widest variety of terrain.

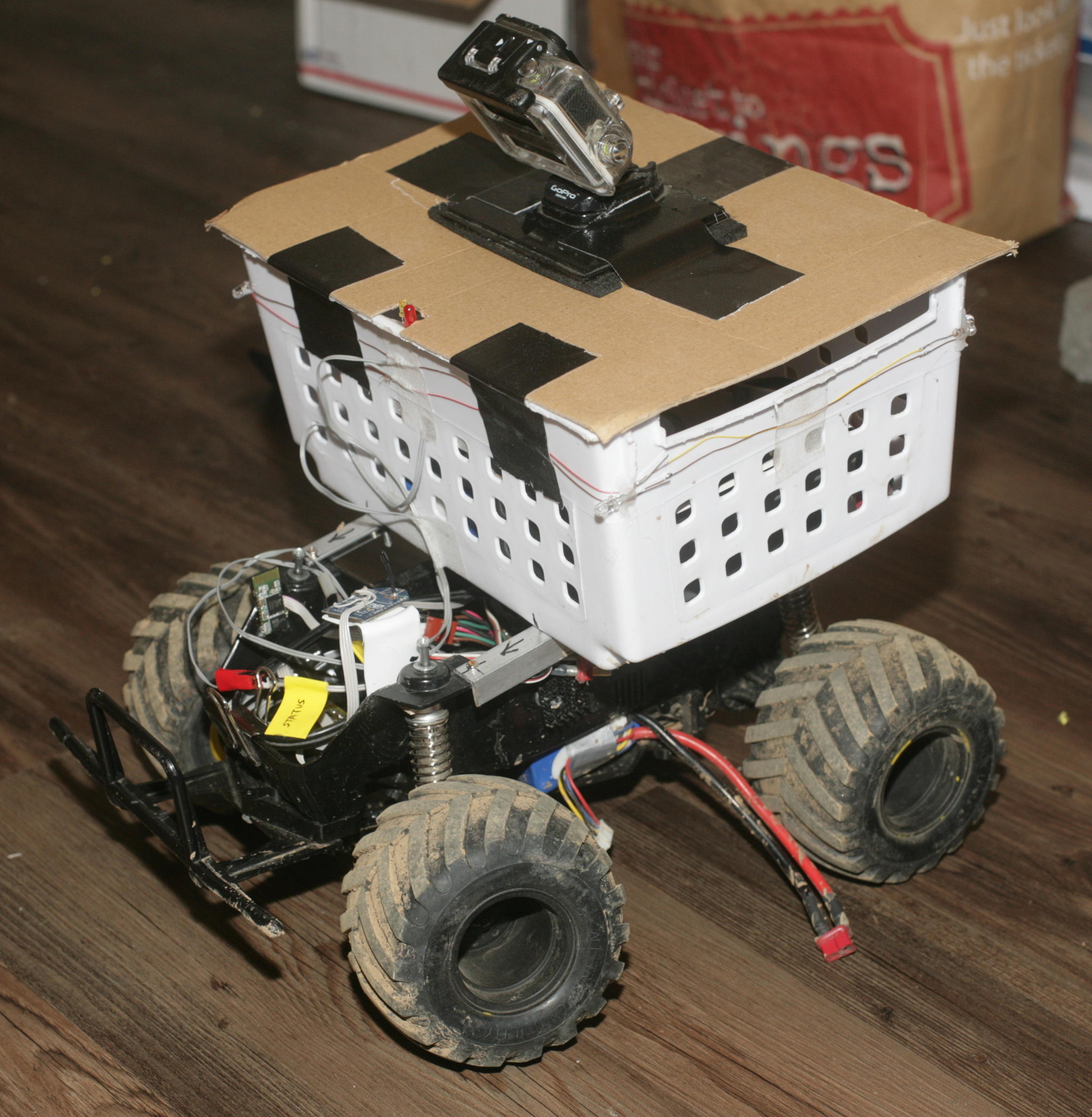

When money started flowing again in 2015, it was finally possible to build a useful platform: the Lunchbox.

The Lunchbox was designed from the ground up to support a 3S 5Ah battery & a brushless motor. This extended its range to 14 miles on a new battery. The brushless motor allowed easy sensing of RPM by reading a GPIO on the ESC. SimonK firmware allowed an old copter ESC to be repurposed for going forwards & backwards in the ground vehicle.
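As a rough illustration of the RPM sensing, here is a minimal sketch of turning pulses counted on that GPIO into a mechanical RPM. It assumes the pin toggles once per commutation step, 6 steps per electrical revolution, & a motor with 7 pole pairs. None of those numbers come from the actual build, so treat `pole_pairs` & `pulses_per_electrical_rev` as placeholders to be measured on a real motor.

```python
def rpm_from_pulses(pulse_count, interval_s, pole_pairs=7,
                    pulses_per_electrical_rev=6):
    """Convert pulses counted over interval_s seconds to mechanical RPM.

    pole_pairs and pulses_per_electrical_rev are assumed values,
    not taken from the Lunchbox hardware.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    # Each electrical revolution produces a fixed number of commutation pulses.
    electrical_revs = pulse_count / pulses_per_electrical_rev
    # One mechanical revolution takes pole_pairs electrical revolutions.
    mechanical_revs = electrical_revs / pole_pairs
    # Scale revolutions per interval up to revolutions per minute.
    return mechanical_revs * 60.0 / interval_s
```

With these assumed values, 420 pulses in 1 second works out to 10 revolutions per second at the motor shaft. The wheel RPM would still have to be divided by the gear ratio, which isn't given here.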

Attention had always been on making an athlete exert a constant power by having the vehicle develop a constant motor power. The problem is power into a motor doesn't equal power out of the motor, so every method to regulate the power of the motor by current sensing or constant voltage failed.

There is a way to do it by regulating RPM based on the incline sensed by an IMU, but this would take quite a bit of experimenting to get the right amount of human effort for every incline. Eventually, the best workout proved not to be a constant effort for all inclines, but random changes in effort manually programmed in based on feelings. A simple phone interface for dialing in a constant RPM proved to be the best solution.
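A constant RPM hold of this kind can be sketched as a small PI loop. This is not the code that ran on the vehicle, just a minimal sketch under stated assumptions: the class name `RpmHold`, the gains, & the toy first-order motor model in `simulate` are all made up for illustration. The target RPM would come from whatever the phone dials in.

```python
class RpmHold:
    """PI controller that outputs a throttle fraction to hold a target RPM.

    Gains are illustrative guesses, not values tuned on a real vehicle.
    """

    def __init__(self, kp=0.0005, ki=0.002, out_min=0.0, out_max=1.0):
        self.kp = kp
        self.ki = ki
        self.out_min = out_min
        self.out_max = out_max
        self.integral = 0.0  # accumulated error in RPM-seconds

    def update(self, target_rpm, measured_rpm, dt):
        error = target_rpm - measured_rpm
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate
        # Only keep the new integral when the output is not saturated,
        # so the integral term can't wind up while the throttle is pinned.
        if self.out_min <= output <= self.out_max:
            self.integral = candidate
        return min(max(output, self.out_min), self.out_max)


def simulate(target_rpm, seconds=10.0, dt=0.02, max_rpm=3000.0, tau=0.5):
    """Run the controller against a hypothetical first-order motor model."""
    controller = RpmHold()
    rpm = 0.0
    for _ in range(int(seconds / dt)):
        throttle = controller.update(target_rpm, rpm, dt)
        # Toy plant: RPM relaxes toward throttle * max_rpm with time constant tau.
        rpm += (throttle * max_rpm - rpm) * dt / tau
    return rpm
```

Calling `simulate(1200.0)` settles close to 1200 RPM with this toy model. A real vehicle would need the gains retuned, since load changes with incline & terrain.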

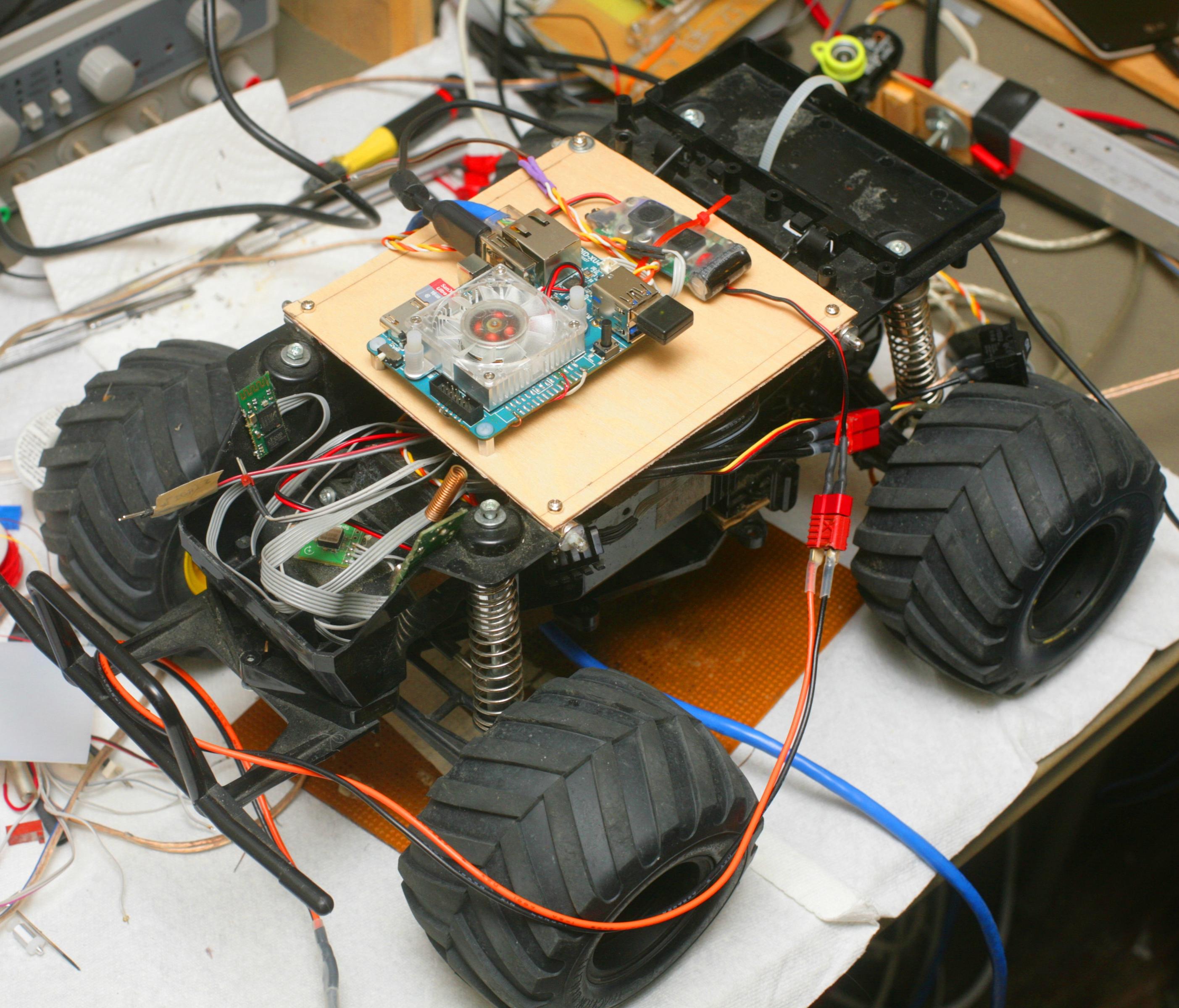

With the Lunchbox platform now capable of fitting a decent payload, attention turned to making it drive itself. This would allow photographing the athlete from multiple angles outside the athlete's field of view. This was a very difficult problem & put into perspective the last 12 years of hype around self driving cars which were always just around the corner.

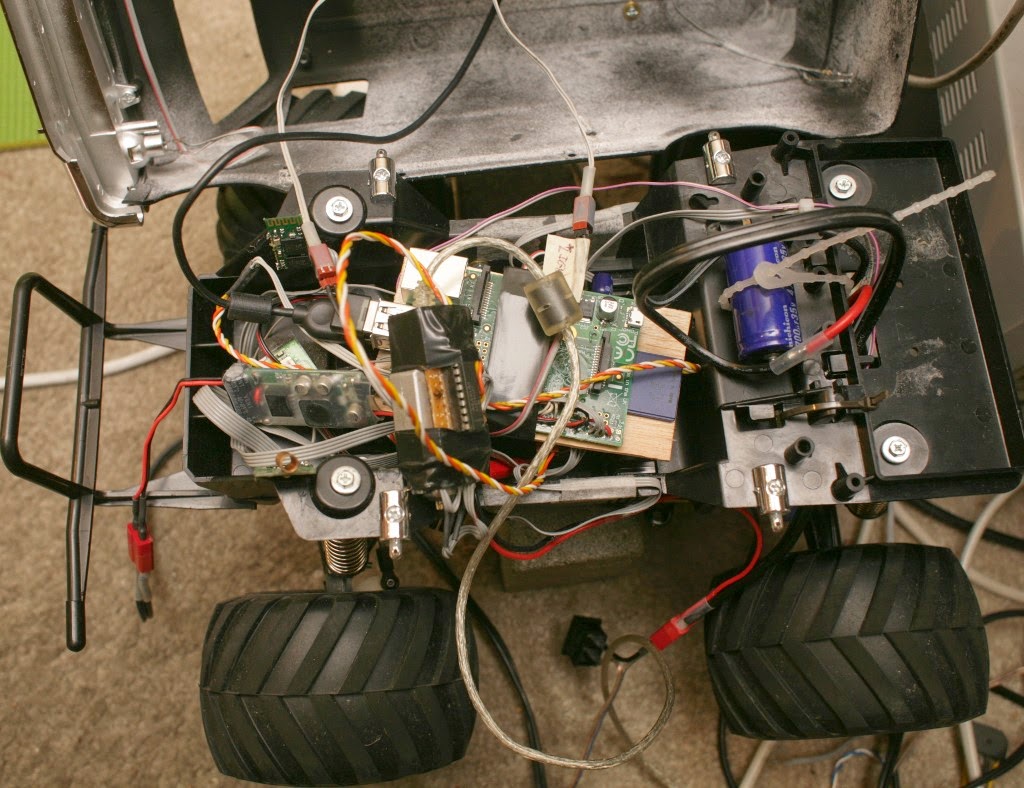

A Raspberry Pi 1.0 did the processing.

There was the side firing camera for tracking the side of the path.

There was a forward camera mounted up high.

There were many edge detection algorithms, chroma keying algorithms, & variance sensing algorithms. Then an Odroid was installed, which provided a massive increase in computing power.
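The details of those algorithms aren't recorded here, but the general idea behind variance sensing can be sketched on a single row of grayscale pixels: smooth pavement has low variance, while grass or gravel beside it has high variance. The function name `find_path_edge`, the window size, & the threshold below are all assumptions for illustration, not the values used on the vehicle.

```python
from statistics import pvariance


def find_path_edge(scanline, window=8, threshold=200.0):
    """Return the start index of the first high-variance block, or None.

    scanline is a list of grayscale pixel values (0-255) from one image row.
    window and threshold are illustrative guesses, not tuned values.
    """
    # Step through the row in non-overlapping blocks of `window` pixels.
    for start in range(0, len(scanline) - window + 1, window):
        block = scanline[start:start + window]
        # A textured surface shows up as a block with high pixel variance.
        if pvariance(block) > threshold:
            return start
    return None
```

A row made of 32 flat pixels followed by alternating dark & bright pixels would report the edge at index 32. A hard shadow across the pavement would also trip a test like this, which is the kind of failure described further down.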

But while this got machine vision farther than it had ever gone before, it wasn't robust enough for practical use. There was a video of the peak of Odroid guided driving with some manual intervention.

Machine vision was deemed the best solution for a worker's budget, not LIDAR, not sonar, not GPS. The main limitation was shadows. It proved easiest to control it semi autonomously.

The next step was to build a 2nd vehicle for the day job, while the lunchbox would be used at home, 50 miles away.

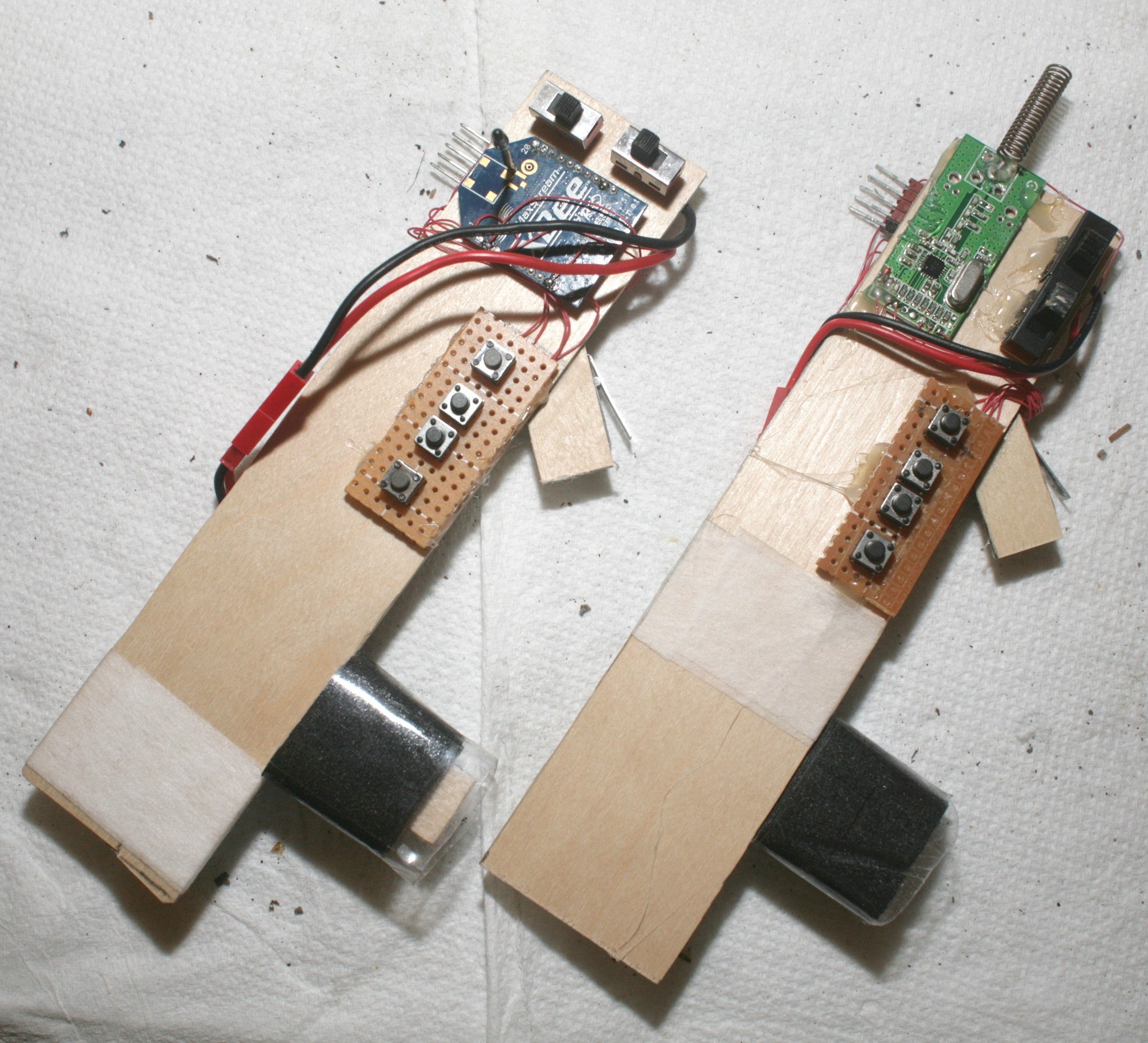

New semi autonomous controls were built for 2 vehicles. The lunchbox was modified for cargo.

An ECX Ruckus was converted into a 2nd semi autonomous vehicle.

The main requirement for the Ruckus was to be narrow for navigating the city. It had a range of 18 miles on a new battery.

Even without autopilot, this vehicle was controllable enough from peripheral vision to record mostly side firing video.

For the 1st time, video feedback was available to an athlete without the need for a 2nd person to drive the robot. Unlike quad copters, the ground robots could get close & navigate in the city. The robots could carry clothing. In exchange for reducing the range to 3 miles, the Lunchbox could carry water.

After 2 years, the establishment finally discovered the promise of robotic exercise coaches. Of course, their version needed official NASA & MIT employees.

Their focus was strictly on the autonomous driving. Their robot uses a downward facing LED strip to follow a line. It only works on a track with a white line. They didn't seem to have discovered the ergonomic manual control yet, nor did they aim a camera sideways for capturing profile videos.

Whether or not robotic pacing is an improvement over a mobile app depends on the athlete & whatever mobile app valuations Janet Yellen deems necessary to prevent a recession. Some athletes may benefit from the feedback of a physical object. Some may benefit from the feedback of a heart rate monitor. The robot edges out a treadmill in giving you contact with real pavement. It edges out a heart rate monitor in giving realtime feedback of your exact speed in relation to the desired speed. Only the robot can carry cargo. Being able to carry all your belongings has been a huge benefit with the robot. The main limitations are that even with semi autonomous control, it's a pain to constantly have to trim the heading, & it can't drive on trails.

A few months of nothing but robotic pacing got a fake test pilot a PR in the half marathon, by the widest margin of any PR. Who knows if that would have happened without robotic pacing or if it was new shoes.

The main problem is now the inability to drive on trails. There is a vehicle which can get around on trails, but only if the $500 billion company which bought it out ever discovers it can be used for training athletes.

Comments

@ Jack: Well done!

For increased autonomy you could try supervised deep learning, you may be happily surprised ...

End to End Learning for Self-Driving Cars. And there is this now.

For trails on a budget, hmm, there's always the aerial route with a GPS in your pocket, although it could be dicey under trees. Or this: A Machine Learning Approach to the Visual Perception of Forest Trails

@chris, I'm thinking yours would be better in front with a tow rope. Running a marathon would be so much easier!

And I was so happy about the size of my new XMAXX ):

I forgot you had the Tim Allen version.

If you set that up as a pace setter it would offer real incentive, especially if you put it behind the runner.

I think I'll hook up my Kubota backhoe.

Best,

Gary

@ Gary: Rover might not be the word ;-) Carl Bass and I are competing in this.

@ Chris,

How about some info on your rover, just getting ready to go down that road with my new XMAXX and Hokuyo Scanner and an Erle2 Brain myself.

Best regards,

Gary

Fantastic Jack,

Haven't heard much from you in the last few years, now this.

As usual, making a silk purse out of a sow's ear and way ahead of the rest of us.

You really are my Hero and no GoPro jokes allowed.

I'm sure the ex Boston Dynamics, currently Google mule robot thingy is not beyond your reach.

I can't wait to see how you make a better one for a thousand dollars.

The Very Best,

Gary

Love this! Did you ever post your machine vision code? I'd love to try the same thing on my Erle2 Brain-powered rover, which has similar processing power to the Odroid.