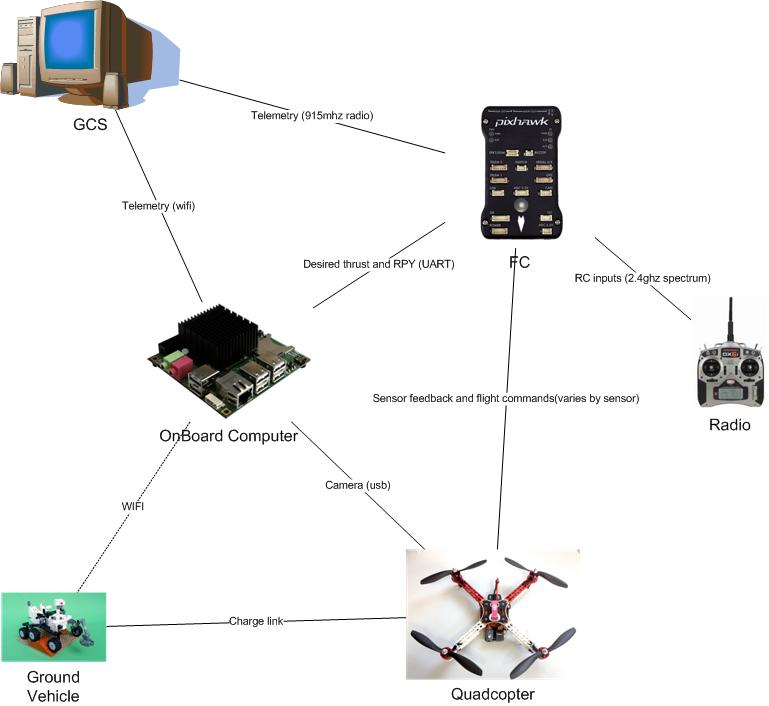

My system attaches to the bottom of current APM systems and allows them to land on a user-defined target (example targets provided). When equipped with the supplementary electronics, it also allows for recharging.

This means that the copter is now capable of performing multiple missions. Combined with a ground vehicle, it can be used as an extension of the ground robot's capabilities, creating a multi-robot system.

Example applications:

- Surveillance: multiple copters watching an area.

- Environmental surveying: mapping or remote sensing over a longer period thanks to recharging. Paired with an AGV, the copter can move around the environment and perform a multitude of missions.

- Extending current AGV operations: aerial mapping and complementary sensors.

- Improved aerial photography: capable of tracking a target.

DETAILS

The cheap, small GPS receivers used on hobby UAVs are only accurate to ±5 m. Traditional landing systems simply move the copter to a predefined GPS location and slowly lower the craft, hoping that nothing is in the way and that the position is where it started. This is nowhere near accurate enough to allow copters to dock and recharge themselves. Normally a human operator has to move the copter from the landing site to a place where it can be recharged, which limits mission operation and requires someone to be physically present.

Using recent advances in low-power, high-performance computing and computer vision techniques, my system will allow the copter to dock, recharge itself and, when finished, fly off again and continue its mission, partially compensating for the poor endurance of multirotors.
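To give a feel for why a camera can beat GPS here, the sketch below converts the pixel position of a detected target into a ground offset in metres using a simple pinhole camera model. It is only an illustration, not the project's actual code: the function name, the parameters, and the assumptions (downward-facing camera, level copter, flat ground, square pixels, known altitude and horizontal field of view) are all mine.

```python
import math


def pixel_to_ground_offset(px, py, image_width, image_height, hfov_deg, altitude_m):
    """Return the (x, y) offset in metres of a target seen at pixel (px, py).

    Assumes a downward-facing camera on a level copter over flat ground,
    with square pixels. The offset is measured from the point directly
    below the camera, in the image's own x/y directions.
    """
    # Focal length in pixels, derived from the horizontal field of view.
    focal_px = (image_width / 2.0) / math.tan(math.radians(hfov_deg) / 2.0)

    # Pixel displacement of the target from the image centre.
    dx_px = px - image_width / 2.0
    dy_px = py - image_height / 2.0

    # Similar triangles: ground offset / altitude = pixel offset / focal length.
    x_m = altitude_m * dx_px / focal_px
    y_m = altitude_m * dy_px / focal_px
    return x_m, y_m


if __name__ == "__main__":
    # Target detected at the right-hand edge of a 640x480 frame, 10 m up.
    print(pixel_to_ground_offset(640, 240, 640, 480, 90.0, 10.0))
```

With a 640×480 image and a 90° horizontal field of view at 10 m altitude, one pixel corresponds to roughly 3 cm on the ground, and the resolution improves as the copter descends. That is the kind of accuracy docking needs and a ±5 m GPS fix cannot provide.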

TODO

- Design testing platform [DONE]

- Build testing platform [DONE]

- Test flightworthiness of platform [DONE]

- Design recharging electronics

- Design vision software for landing [DONE]

- Test integration of vision system with platform

COMPONENTS

- 1×Hexacopter

- 1×ODROID-U3 computer

- 1×Pixhawk autopilot

- 1×USB webcam

Simulation of autonomous landing

13 days ago • 0 comments

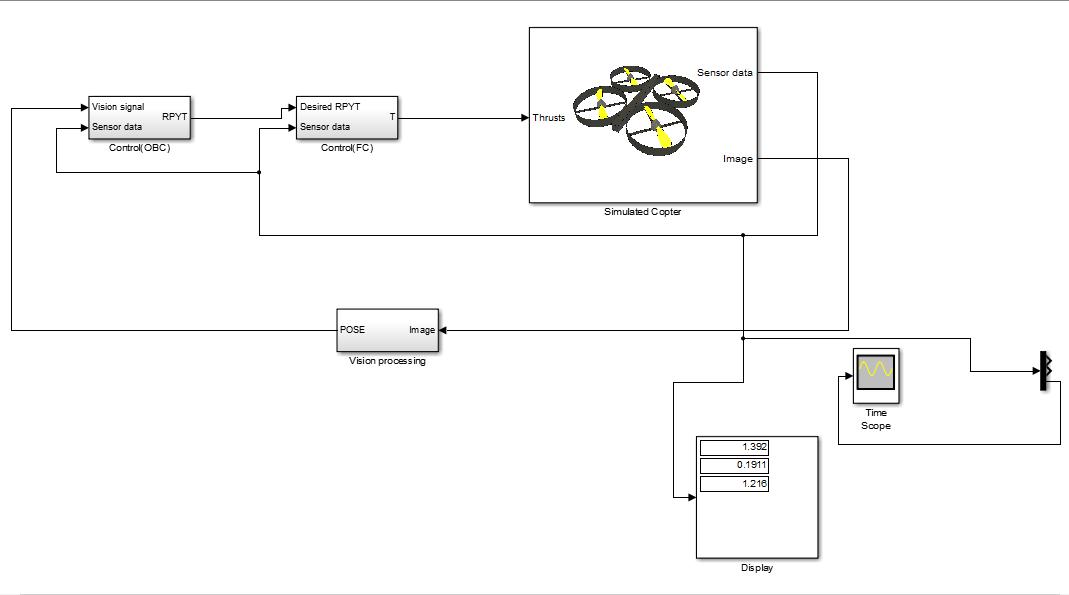

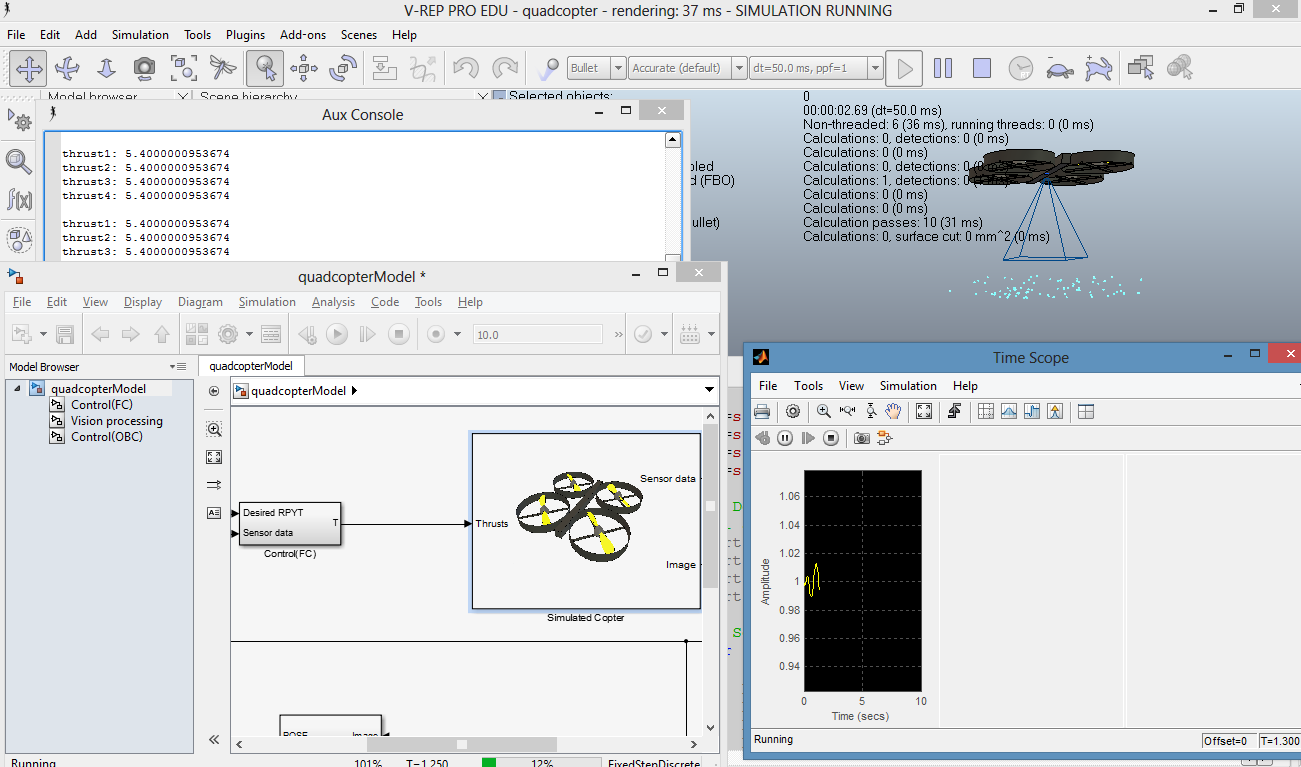

Using the free V-REP simulation environment and my access to MATLAB and Simulink via the university, I have developed a simulation model of the copter. I can now work on designing vision-based control algorithms without the risk of crashing copters.
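As a rough idea of the kind of control loop that can be tried in simulation first, here is a toy pure-Python stand-in. It is not the V-REP or Simulink model: it treats the copter as a point that follows velocity commands perfectly, and every name, gain, and limit in it is an assumption chosen for illustration. The copter starts with a GPS-sized horizontal error, a proportional controller steers it over the target, and it only descends while it is within a horizontal tolerance.

```python
import math


def simulate_landing(start_offset_m=(4.0, -3.0), start_altitude_m=10.0,
                     gain=0.8, max_speed_mps=1.0, descent_rate_mps=0.5,
                     tolerance_m=0.2, dt=0.1, max_steps=2000):
    """Simulate a point-mass copter landing on a target at the origin.

    Returns (final_x, final_y, elapsed_seconds) on touchdown, or None if
    the copter has not landed after max_steps.
    """
    x, y = start_offset_m
    z = start_altitude_m

    for step in range(max_steps):
        # Proportional control: command a velocity toward the target,
        # clamped to the maximum horizontal speed on each axis.
        vx = max(-max_speed_mps, min(max_speed_mps, -gain * x))
        vy = max(-max_speed_mps, min(max_speed_mps, -gain * y))
        x += vx * dt
        y += vy * dt

        # Descend only while the target is within the horizontal tolerance.
        if math.hypot(x, y) <= tolerance_m:
            z -= descent_rate_mps * dt

        if z <= 0.0:
            return x, y, (step + 1) * dt

    return None


if __name__ == "__main__":
    print(simulate_landing())
```

In a real simulation the measured offset would come from the vision system and be noisy, and the copter would have its own dynamics, so a loop like this is only a starting point for tuning gains and tolerances before any hardware is put at risk.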

Comments

great!

Sunlight is the main wildcard in outdoor visual landing systems such as this. At any given time of day, depending on weather, there's a variable swath of the sky from which the drone could not approach the landing target, as it will be blinded by reflections from the target. If the sun is just a little too dim, hidden behind clouds, the drone is hue-blind as well. (You could try using IR beacons as in the IR-LOCK project, but those tend to get lost in full sun, and it's difficult to distinguish individual LED point sources from, e.g., automobile chrome reflections.) There's also a big difference between starting with a clean, direct overhead view of the target and laboriously finding it starting with +/- 4 m horizontal GPS error.

It seems like multiple teams are getting close to a solution, but until someone demos multiple precision landings on an outdoor target in variable daylight conditions, the job isn't really done. (Same could be said of the similar follow-me camera problem, but Kickstarter backers are willing to back something that is less than half baked.)

@Jack, according to his checklist, no.

Very cool. Any video of it working outside of simulation?

Has it autonomously recharged?

Is there a link to the code/GitHub somewhere?

Really cool :)